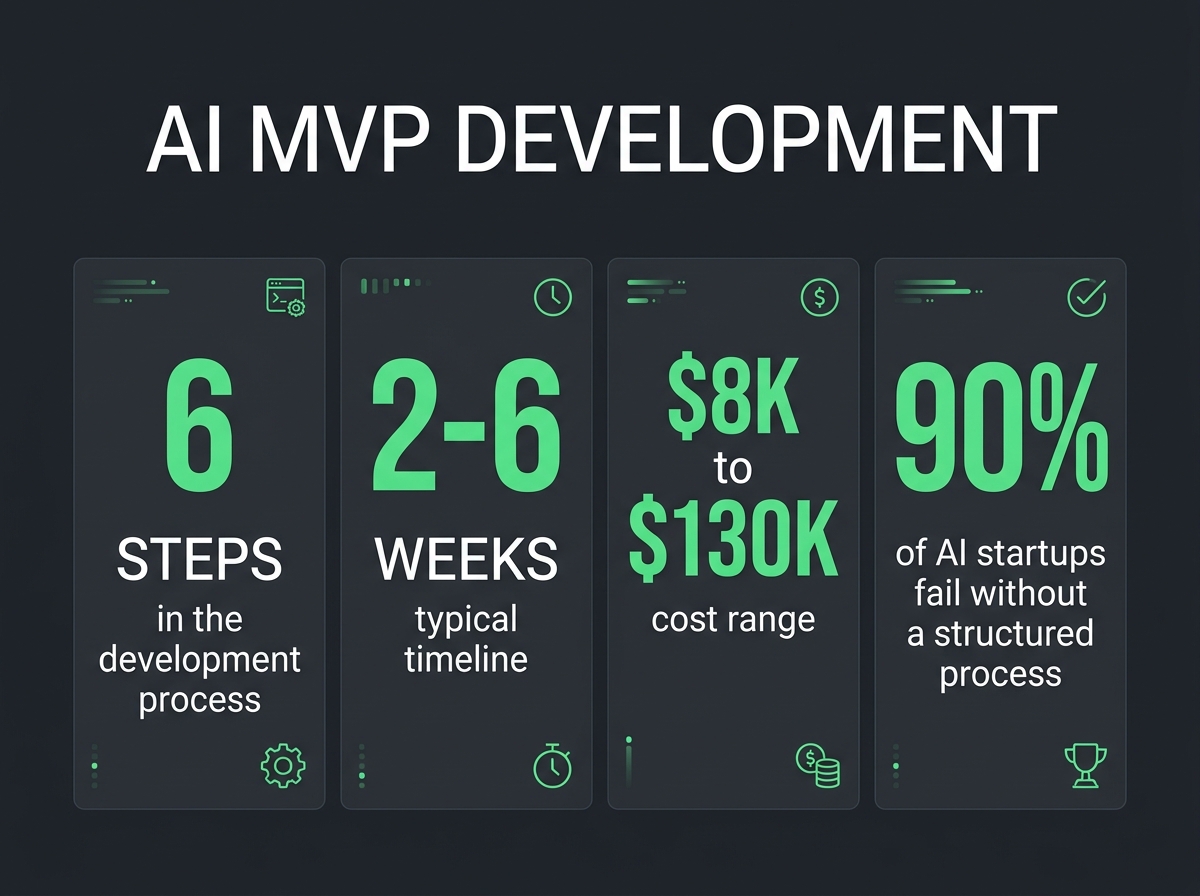

AI captured $202.3 billion in venture funding in 2025, a 75% year-over-year increase (Crunchbase, 2025). Yet 90% of AI startups fail within their first year (AI4SP, 2025). The gap between those two numbers is where most founders lose their money: they raise capital, skip the validation step, and build a product nobody needs at a scale nobody asked for.

The founders who survive do something different. They build an AI MVP first, a focused, functional AI product that proves one hypothesis before burning through runway on features, infrastructure, and hiring. Jasper launched its MVP in 30 days with a few content templates powered by GPT-3 and went on to reach $80 million ARR. PDF.ai grew past $60,000 MRR after being acquired at the MVP stage. The pattern is consistent: start small, validate fast, scale what works.

This guide is the definitive resource on AI MVP development. It covers how AI MVPs differ from traditional MVPs, a six-step process for building one, real tech stack recommendations with named tools, honest cost data, concrete examples, and the mistakes that kill most AI startups before they find product-market fit. If you are a non-technical founder building an AI product, or a technical founder who wants a structured framework for getting from idea to validated product, this is where you start.

Table of Contents

- What Is AI MVP Development?

- Why Build an AI MVP First

- The AI MVP Development Process (6 Steps)

- AI MVP Tech Stack Recommendations

- AI MVP Cost Factors

- Common AI MVP Examples

- How to Build Your AI MVP: DIY vs. Partner

- AI MVP Mistakes to Avoid

- FAQ

What Is AI MVP Development?

AI MVP development is the process of building a minimum viable AI product, typically an LLM-powered application, data pipeline, or intelligent automation, to validate a startup’s core AI hypothesis before committing to full-scale development. The goal is not to build a complete AI platform. The goal is to answer one question: does this AI capability solve a real problem that real users will pay for?

That definition matters because it separates AI MVP development from two things founders confuse it with: building a demo and building a product.

A demo shows that AI can do something impressive. A product delivers that capability to users at scale with reliability, error handling, and a business model. An AI MVP sits between them. Functional enough for real users to get real value, lean enough to build in weeks instead of months.

How AI MVPs Differ from Traditional MVPs

The MVP concept comes from Eric Ries and the Lean Startup methodology. Build the simplest version of your product, get it in front of users, learn from their behavior, iterate. That framework works for traditional software. For AI products, three differences change the equation.

The AI layer adds technical uncertainty. A traditional MVP for a project management tool has predictable behavior. If a user creates a task, the task appears. An AI MVP for a document analysis tool has probabilistic behavior. The model might misclassify a contract clause, hallucinate a citation, or produce inconsistent outputs across identical inputs. Your MVP must account for this uncertainty. That means building evaluation frameworks, guardrails, and fallback mechanisms into the minimum viable version, not after it.

Data requirements front-load the work. Traditional MVPs can launch with an empty database and grow data as users arrive. AI MVPs often need training data, evaluation datasets, or retrieval corpora before the product can function at all. A RAG-based MVP needs a curated knowledge base. A classification MVP needs labeled examples. This front-loading changes your timeline and your budget.

API costs create variable unit economics. Traditional SaaS has near-zero marginal cost per user. AI products built on model APIs (OpenAI, Anthropic, Google) have per-request costs that scale with usage. A customer who sends 500 queries per day costs you meaningfully more than one who sends 5. Your MVP must validate not just whether users want the product, but whether the unit economics work at the price point users will accept.
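To make the per-request cost point concrete, here is a minimal sketch of how monthly API spend scales with usage. The token prices, token counts per request, and the `TokenPricing` name are illustrative assumptions, not any provider's actual rates; substitute the current figures from your provider's pricing page.

```python
from dataclasses import dataclass


@dataclass
class TokenPricing:
    """Hypothetical prices in USD per one million tokens (assumed values)."""
    input_per_million: float
    output_per_million: float


def cost_per_request(pricing: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one model call, given its input and output token counts."""
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


def monthly_cost(pricing: TokenPricing, queries_per_day: int,
                 input_tokens: int, output_tokens: int, days: int = 30) -> float:
    """Monthly API cost for one user with a steady daily query volume."""
    return queries_per_day * days * cost_per_request(pricing, input_tokens, output_tokens)


# Assumed prices and an assumed average request size of 1,500 input / 500 output tokens.
pricing = TokenPricing(input_per_million=2.50, output_per_million=10.00)
light_user = monthly_cost(pricing, queries_per_day=5, input_tokens=1500, output_tokens=500)
heavy_user = monthly_cost(pricing, queries_per_day=500, input_tokens=1500, output_tokens=500)

print(f"light user: ${light_user:.2f}/month, heavy user: ${heavy_user:.2f}/month")
```

Under these assumptions the heavy user costs 100 times more to serve than the light user, which is the gap a flat subscription price has to absorb.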

These three differences (technical uncertainty, data dependencies, and variable costs) are why generic MVP advice fails for AI products. Building an AI MVP requires a different playbook than building a traditional software MVP.

Why Build an AI MVP First

Skip the MVP phase and you join the 90% of AI startups that fail. Here is specifically what an AI MVP validates that nothing else can.

Validate AI Feasibility Before Scaling

The most expensive mistake in AI development is building production infrastructure for an AI capability that does not work reliably enough for real users. We have seen this pattern repeatedly at Downshift: a founder raises $500,000, hires a team, spends six months building, and discovers that their AI model’s accuracy drops from 95% on test data to 73% on real-world inputs. The product is technically functional and practically useless.

An AI MVP tests feasibility with real users and real data in weeks, not months. You discover the accuracy gap, the hallucination frequency, the edge cases, and the latency constraints before you have spent your runway. The cost of an AI MVP, typically $15,000 to $80,000 (Biz4Group, 2025), is a fraction of the cost of rebuilding a product whose AI layer does not perform.

Reduce Time-to-Market

AI-assisted development tools have compressed MVP timelines dramatically. Traditional MVP development takes 3 to 9 months. AI MVPs built with modern tooling and API-first architectures ship in 2 to 6 weeks (Ideas2It, 2026). That speed advantage compounds: founders who ship MVPs within 60 days show higher fundraising success because they demonstrate velocity and validated demand, not just a pitch deck.

The compression comes from two sources. First, foundation model APIs (OpenAI, Anthropic, Google) eliminate the need to train custom models for most MVP use cases. You can build a functional AI chatbot, document processor, or recommendation engine using API calls instead of months of model training. Second, AI coding assistants accelerate the application development layer itself, the frontend, backend, and integration code that wraps the AI capability into a usable product.

Attract Investors with a Working Prototype

AI captured nearly 50% of all global venture funding in 2025 (Crunchbase). Investors are writing large checks for AI companies, $202.3 billion across the sector. But they are also getting more selective. The era of funding AI pitch decks without working products is ending.

A working AI MVP changes the investor conversation from “We plan to build an AI product” to “Here is our AI product. Here is what users do with it. Here is the data on retention, accuracy, and unit economics.” That shift moves you from the 90% of AI startups that fail to the cohort that gets funded, because you have replaced assumptions with evidence.

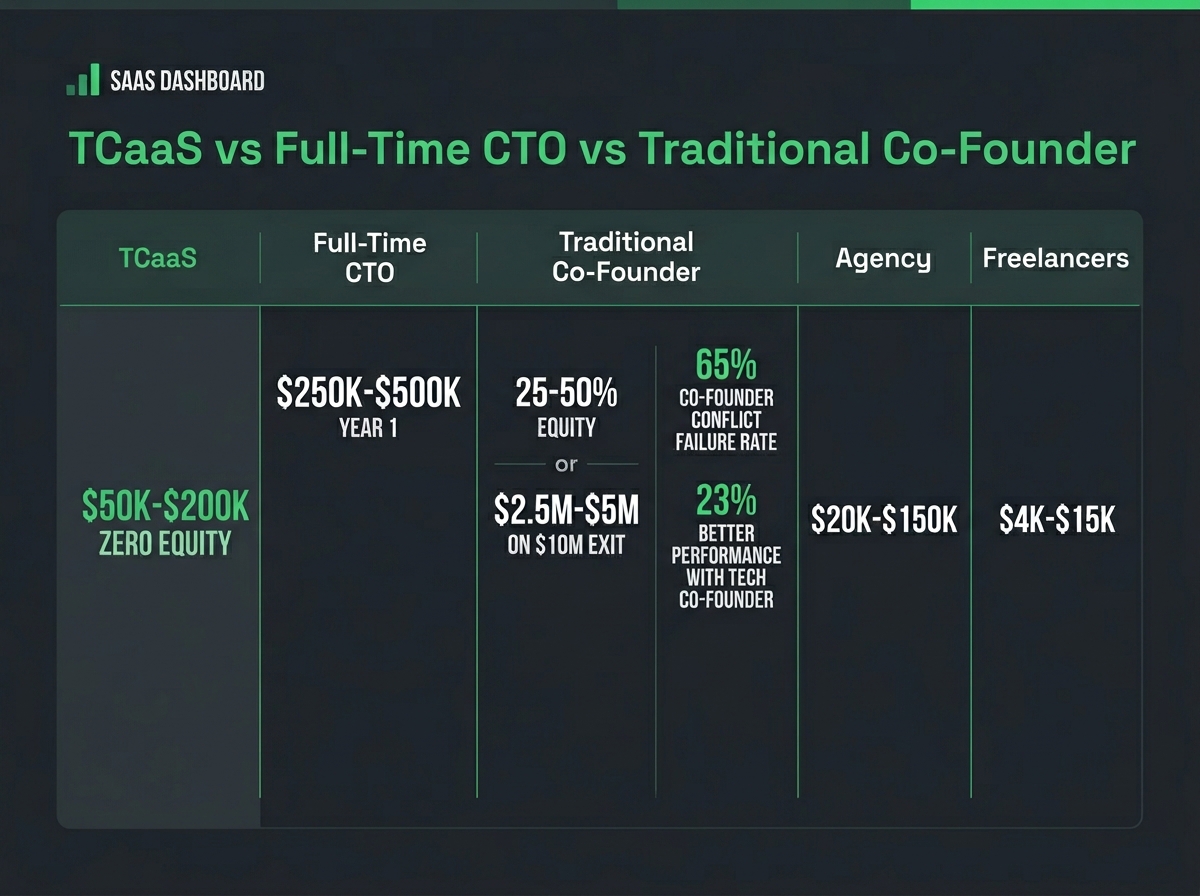

Investors at the pre-seed and seed stage evaluate two things: can the team execute, and is there a market? An MVP answers both questions simultaneously. For non-technical founders, working with a technical co-founder service adds the technical credibility that investors require when evaluating AI ventures.

The AI MVP Development Process (6 Steps)

This is the framework we use with every AI venture at Downshift. Six steps, each with a clear deliverable. Skip a step and you create debt that compounds in every subsequent phase.

Step 1: Define the AI-Powered Value Proposition

Start with the problem, not the technology. The most common AI MVP failure we see is founders who start with “I want to build an AI product” instead of “I want to solve this specific problem, and AI is the best way to solve it.”

Your value proposition must answer three questions:

1. What specific problem does this solve? Not “helps businesses with data.” That is a category, not a problem. “Reduces the time insurance adjusters spend reviewing claims documents from 45 minutes to 3 minutes” is a problem with a measurable outcome.

2. Why is AI the right solution? If a rules-based system, a simple database query, or a well-designed form can solve the problem, AI adds complexity without value. AI earns its place when the problem involves unstructured data, natural language, pattern recognition, or decision-making that requires handling ambiguity.

3. What is the minimum AI capability that proves the value? Your MVP does not need to handle every edge case, every document type, or every language. It needs to handle the core use case well enough that users choose it over their current workflow. Define that threshold explicitly: 85% accuracy on standard claims documents, 90% relevance in retrieval results, sub-2-second response time.

Write the value proposition in one sentence: “[Product] uses [AI capability] to help [specific user] accomplish [specific outcome] [quantified improvement].”
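An explicit threshold such as "85% accuracy on standard claims documents" is only useful if you can check it. The sketch below shows one way to do that against a small labeled evaluation set. The `stub_classifier` function, the labels, and the five examples are placeholders invented for illustration; in a real MVP the classifier would be your model call and the examples would come from real documents.

```python
from typing import Callable, Sequence

Example = tuple[str, str]  # (input text, expected label)

ACCURACY_THRESHOLD = 0.85  # the explicit bar the MVP must clear


def accuracy(predict: Callable[[str], str], examples: Sequence[Example]) -> float:
    """Fraction of examples where the prediction matches the expected label."""
    if not examples:
        raise ValueError("evaluation set is empty")
    correct = sum(1 for text, expected in examples if predict(text) == expected)
    return correct / len(examples)


def stub_classifier(text: str) -> str:
    """Placeholder for the real model call (assumption for this sketch)."""
    return "auto" if "vehicle" in text.lower() else "property"


EVAL_SET: list[Example] = [
    ("Vehicle collision on highway", "auto"),
    ("Water damage in kitchen", "property"),
    ("Stolen vehicle report", "auto"),
    ("Roof damaged by hail", "property"),
    ("Rear-end crash at intersection", "auto"),  # the stub misses this one
]

score = accuracy(stub_classifier, EVAL_SET)
passed = score >= ACCURACY_THRESHOLD
print(f"accuracy={score:.2f} passed={passed}")  # prints "accuracy=0.80 passed=False"
```

Running a check like this on every prompt or model change turns the threshold from a slide-deck number into a gate.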

Step 2: Select Your AI Architecture (LLM, ML, Rule-Based)

Not every AI MVP needs a large language model. The architecture decision shapes your cost, timeline, accuracy, and scalability. Choose wrong here and you build something that either costs too much to run or does not perform well enough to retain users.

LLM-based (API-first). Use when: your product processes natural language, generates content, answers questions, or requires reasoning over text. Examples: AI chatbots, document analysis tools, content generation, code assistants. Cost profile: per-token API costs, low training cost, fast to build. Timeline: 2-4 weeks for core MVP. The vast majority of AI MVPs in 2026 use this architecture because foundation model APIs handle the heavy AI work.

Traditional ML (custom models). Use when: your product needs classification, prediction, or pattern recognition on structured data. Examples: fraud detection, demand forecasting, image classification, recommendation engines. Cost profile: higher training cost, lower inference cost at scale. Timeline: 6-12 weeks due to data collection, labeling, and model training. Choose this when LLMs are overkill or when you need deterministic behavior.

RAG (Retrieval-Augmented Generation). Use when: your product needs to answer questions using a specific knowledge base. Examples: customer support bots trained on company documentation, legal research tools, medical reference assistants. This is LLM-based but adds a retrieval layer. A vector database stores your documents, and the model generates answers grounded in your specific data rather than its general training. This architecture reduces hallucination and makes outputs verifiable. Timeline: 3-6 weeks.
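The retrieval step can be sketched without any external services. The example below uses plain word-overlap cosine similarity as a stand-in for embeddings and a vector database, and the knowledge base entries and document ids are made up for illustration. It shows the shape of the flow (retrieve relevant passages, then build a grounded prompt with source ids), not a production retrieval pipeline.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, documents: dict[str, str], top_k: int = 2) -> list[tuple[str, str]]:
    """Return up to top_k (doc_id, text) pairs that share vocabulary with the question."""
    q = Counter(tokenize(question))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id, text)
              for doc_id, text in documents.items()]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(doc_id, text) for score, doc_id, text in scored[:top_k] if score > 0]


def build_prompt(question: str, passages: list[tuple[str, str]]) -> str:
    """Ground the model in retrieved passages and ask it to cite source ids."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return ("Answer using only the sources below and cite the source id.\n"
            f"Sources:\n{context}\n\nQuestion: {question}")


# Hypothetical knowledge base for illustration.
KNOWLEDGE_BASE = {
    "loans-01": "Personal loans have a fixed interest rate and terms from 12 to 60 months.",
    "loans-02": "Early repayment of a personal loan carries no penalty fee.",
    "cards-01": "Credit cards include travel insurance on purchases abroad.",
}

question = "Is there a penalty for early repayment of my loan?"
passages = retrieve(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, passages)  # this string is what you would send to the LLM
```

Because the prompt carries source ids, the answer can cite them, which is what makes the output verifiable.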

Rule-based with AI enhancement. Use when: most of your logic is deterministic but a few decision points benefit from AI. Examples: workflow automation with AI-powered document classification at the intake step, CRM with AI-driven lead scoring. This is the lowest-risk architecture because the AI component is modular. If it fails, the product still works. Timeline: 2-4 weeks.
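One way to picture the "if it fails, the product still works" property is a router where deterministic rules run first and the AI classifier is an optional, failure-tolerant step. The route names, keyword rules, and classifier signature below are assumptions made for this sketch.

```python
from typing import Callable, Optional

# Hypothetical routing table and default queue.
ROUTES = {"invoice": "accounts-payable", "contract": "legal"}
DEFAULT_ROUTE = "manual-review"


def classify_by_rules(text: str) -> Optional[str]:
    """Deterministic rules that cover the obvious cases."""
    lowered = text.lower()
    if "invoice number" in lowered:
        return "invoice"
    if "hereinafter" in lowered:
        return "contract"
    return None


def route_document(text: str,
                   ai_classifier: Optional[Callable[[str], str]] = None) -> str:
    """Route by rules first; consult the AI only when the rules are silent."""
    label = classify_by_rules(text)
    if label is None and ai_classifier is not None:
        try:
            label = ai_classifier(text)
        except Exception:
            label = None  # an AI outage must not break the workflow
    return ROUTES.get(label, DEFAULT_ROUTE)


def broken_classifier(text: str) -> str:
    """Simulates a model API that is down."""
    raise TimeoutError("model unavailable")


print(route_document("Invoice number 4471, due in 30 days"))
print(route_document("Quarterly memo", broken_classifier))
```

With the classifier down, unmatched documents fall through to the manual queue instead of raising an error.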

Hybrid approaches. Most production AI products end up combining architectures. A document processing MVP might use an LLM for text extraction, a custom classifier for document categorization, and rules-based logic for routing. Start with the simplest architecture that proves your value proposition, then add complexity as you validate.

Step 3: Choose Your Tech Stack

Your tech stack for an AI MVP should optimize for speed-to-market and iteration speed, not for theoretical scale. You are building to validate, not to serve a million users. Here are specific, named recommendations based on what we use in production.

Frontend: Next.js or React with TypeScript. Both have massive ecosystems, strong community support, and deploy to Vercel or similar platforms in minutes. Next.js gives you server-side rendering for SEO-sensitive pages. For mobile-first MVPs, React Native or Flutter. For the fastest possible path to a working interface, v0.dev or Bolt can generate functional UI components from descriptions.

Backend and API: Node.js with Express or Fastify for simplicity and speed. Python with FastAPI if your AI layer requires Python-native ML libraries. For startups that need both (a TypeScript frontend team and Python AI work), build the AI layer as a separate microservice behind an API.

AI/ML layer: For LLM-based products, start with OpenAI’s API (GPT-4o) or Anthropic’s API (Claude). Both offer reliable, well-documented APIs with strong developer ecosystems. Use LangChain or LlamaIndex as your orchestration framework: LangChain for complex agent workflows, LlamaIndex for RAG applications specifically (Second Talent, 2026). For vector storage (RAG), use Pinecone, Weaviate, or pgvector (a PostgreSQL extension), depending on whether you want managed infrastructure or self-hosted. For custom ML models, use Hugging Face Transformers with PyTorch.

Infrastructure: PostgreSQL for your application database, with Neon or Supabase if you want it managed. LLMs understand SQL far better than NoSQL query languages, which matters when you are building AI features that query your data. Redis for caching and rate limiting. Vercel or Railway for deployment. AWS, GCP, or Azure for any GPU-intensive workloads.

For a deeper breakdown of tech stack decisions for AI startups, see our best tech stack for AI startups guide.

| Layer | Recommended Tools | When to Use |
|---|---|---|
| Frontend | Next.js, React, TypeScript | Web apps, dashboards, SaaS interfaces |
| Mobile | React Native, Flutter | Mobile-first AI products |
| Backend | Node.js (Fastify), Python (FastAPI) | API layer, business logic |
| LLM API | OpenAI (GPT-4o), Anthropic (Claude) | Natural language, generation, reasoning |
| Orchestration | LangChain, LlamaIndex | Multi-step AI workflows, RAG pipelines |
| Vector DB | Pinecone, pgvector, Weaviate | RAG, semantic search, embeddings |
| App Database | PostgreSQL (Neon, Supabase) | Application data, user data, metadata |
| Auth | Clerk, BetterAuth, Supabase Auth | User authentication, session management |
| Deployment | Vercel, Railway, AWS | Hosting, CI/CD, infrastructure |
| Monitoring | Sentry, PostHog, LangSmith | Error tracking, analytics, LLM observability |

Step 4: Build the Core AI Feature

This is where most founders make the mistake that kills their MVP: they build the product around the AI instead of building the AI into the product.

Your users do not care about your model architecture, your prompt engineering, or your retrieval pipeline. They care about the outcome: the answered question, the generated document, the classified image, the automated workflow. Build the user experience first, then connect the AI capability behind it.

The build order that works:

1. Build the interface for the core user action. If your MVP is an AI document analyzer, build the upload page and results display first. Use mock data. Make sure the user flow is intuitive before the AI layer exists.

2. Connect the AI API and build the processing pipeline. Wire up the LLM or ML model. Build the prompt templates (for LLM products) or the inference pipeline (for ML products). Implement basic error handling. What happens when the model returns garbage?

3. Add guardrails and evaluation. AI outputs are probabilistic. Your MVP needs: input validation (reject inputs the model cannot handle), output validation (check for hallucinations, format compliance, safety), fallback behavior (what the user sees when the AI fails), and basic logging (track inputs, outputs, and latency for every request so you can measure quality).

4. Build the feedback mechanism. Your MVP must capture user reactions to AI outputs. Thumbs up/down, edit tracking, explicit corrections. Any signal that tells you whether the AI is producing useful results. This data is your most valuable asset. It tells you where the model works, where it fails, and what to improve first.
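The guardrail pieces named in the build order (input validation, output validation, fallback behavior, and request logging) can fit in one small wrapper around the model call. The sketch below assumes the model is asked to return JSON with a `summary` field. That contract, the size limit, and the `call_model` parameter are illustrative choices, and the output check covers format compliance only, not hallucination detection.

```python
import json
import logging
import time
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("ai_mvp")

MAX_INPUT_CHARS = 20_000  # assumed limit; tune to your model's context window
FALLBACK_MESSAGE = "We could not analyze this document. Please try a shorter or cleaner file."


@dataclass
class Result:
    ok: bool
    summary: str


def analyze(document: str, call_model: Callable[[str], str]) -> Result:
    """Run one guarded model call: validate input, validate output, fall back, log."""
    # Input validation: reject what the model cannot handle.
    if not document.strip() or len(document) > MAX_INPUT_CHARS:
        return Result(False, FALLBACK_MESSAGE)

    started = time.perf_counter()
    raw = ""
    try:
        raw = call_model(document)
        # Output validation: require the agreed JSON shape.
        summary = json.loads(raw)["summary"]
        if not isinstance(summary, str) or not summary.strip():
            raise ValueError("empty or non-string summary")
        result = Result(True, summary)
    except Exception as exc:
        # Fallback behavior: the user sees a useful message, never a stack trace.
        logger.warning("model output rejected: %s", exc)
        result = Result(False, FALLBACK_MESSAGE)

    # Basic logging: input size, raw output, latency, and outcome for every request.
    latency_ms = (time.perf_counter() - started) * 1000
    logger.info("input_chars=%d output=%r latency_ms=%.1f ok=%s",
                len(document), raw, latency_ms, result.ok)
    return result
```

Passing the model call in as a function keeps the wrapper testable with stubs, for example `analyze("Lease text", lambda doc: '{"summary": "Rent is due monthly."}')`.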

Do not spend time on user authentication, billing, admin dashboards, or analytics in the first build. Those come after you validate that the core AI feature works and users want it.

Step 5: Wrap It in a Usable Product

The AI feature alone is not a product. Users need to discover it, understand it, use it repeatedly, and eventually pay for it. This step turns your AI capability into something that looks and feels like a product.

Minimum product wrapper for an AI MVP:

- Onboarding flow. Two screens maximum. Explain what the product does, collect the minimum information needed (company name, use case), and get the user to the core AI feature in under 60 seconds.

- Core feature interface. The screen where the AI happens. Clean, focused, one primary action. No feature menus with grayed-out “coming soon” items.

- Results display. How the AI output is presented to the user. This is where most of your UX effort should go. A perfectly accurate AI model with a confusing results page will still feel broken to users.

- Error states. What the user sees when the AI fails, takes too long, or produces low-confidence results. “Something went wrong” is not acceptable. “We could not find a clear answer in your document. Here are the three most relevant sections. Can you clarify which one you need?” is an error state that maintains trust.

- Basic authentication. Email and password or Google OAuth. Do not build role-based access, team management, or SSO for an MVP.

Step 6: Test, Validate, Iterate

Your AI MVP is built. Now you need to answer the question it was designed to answer: does this AI capability solve a real problem for real users?

What to measure:

- Task completion rate. What percentage of users who start the core action complete it successfully? If users upload a document but never act on the analysis, the AI output is not useful enough.

- AI accuracy (user-perceived). Track the thumbs up/down ratio, the edit rate, and the explicit correction rate on AI outputs. Aggregate accuracy from your evaluation dataset matters less than whether individual users find individual outputs helpful.

- Retention. Do users come back? A user who tries your AI document analyzer once is curious. A user who uploads documents every week has a workflow dependency. That is product-market fit signal.

- Time-to-value. How long from signup to the first “aha” moment? For AI products, this should be under 5 minutes. If users need 30 minutes of setup before they see AI value, your onboarding is the bottleneck.

- Unit economics. What does it cost to serve each user? Track API costs per request, infrastructure costs per user, and support costs. If your AI costs $0.50 per query and users average 100 queries per month, your per-user cost is $50/month. Your pricing must account for this.

Run the MVP with 20-50 target users for 2-4 weeks. Collect quantitative data (the metrics above) and qualitative feedback (user interviews, support tickets, feature requests). The data tells you one of three things: iterate on the current approach, pivot the value proposition, or scale what works. For a structured approach to AI idea validation before building, see our guide to validating AI startup ideas.
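Most of the metrics above can be computed from a handful of per-user counters. The sketch below is one possible shape: the field names are hypothetical, the sample numbers are invented, and "retention" is defined here as the share of users active in every week of the test, which is only one of several reasonable definitions.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class UserStats:
    started: int        # core actions started
    completed: int      # core actions completed successfully
    thumbs_up: int
    thumbs_down: int
    active_weeks: int   # weeks of the test with at least one use
    api_cost_usd: float


def summarize(users: Sequence[UserStats], test_weeks: int) -> dict[str, float]:
    """Aggregate the validation metrics across all test users."""
    if not users:
        raise ValueError("no users to summarize")
    started = sum(u.started for u in users)
    completed = sum(u.completed for u in users)
    up = sum(u.thumbs_up for u in users)
    votes = up + sum(u.thumbs_down for u in users)
    retained = sum(1 for u in users if u.active_weeks >= test_weeks)
    return {
        "task_completion_rate": completed / started if started else 0.0,
        "approval_rate": up / votes if votes else 0.0,
        "retention": retained / len(users),
        "api_cost_per_user": sum(u.api_cost_usd for u in users) / len(users),
    }


# Invented sample data for a 4-week test with three users.
sample = [
    UserStats(started=10, completed=8, thumbs_up=6, thumbs_down=2, active_weeks=4, api_cost_usd=12.0),
    UserStats(started=5, completed=2, thumbs_up=1, thumbs_down=3, active_weeks=1, api_cost_usd=3.0),
    UserStats(started=5, completed=5, thumbs_up=3, thumbs_down=0, active_weeks=4, api_cost_usd=9.0),
]
report = summarize(sample, test_weeks=4)
print(report)
```

Comparing `api_cost_per_user` against your planned price is the quickest check on whether the unit economics hold.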

AI MVP Cost Factors

AI MVP development costs range from $8,000 to $130,000 depending on complexity, architecture, and team structure. That range is wide because an AI chatbot MVP built on OpenAI’s API is a fundamentally different project than a custom computer vision MVP that requires model training.

Here is how costs break down by component.

| Cost Component | Simple AI MVP | Moderate AI MVP | Complex AI MVP |
|---|---|---|---|
| Architecture | LLM API calls | RAG + LLM | Custom ML + LLM |
| AI layer | $2,000-$5,000 | $8,000-$20,000 | $25,000-$50,000 |
| Application development | $5,000-$10,000 | $10,000-$25,000 | $20,000-$40,000 |
| Data preparation | $0-$2,000 | $3,000-$8,000 | $10,000-$25,000 |
| Infrastructure (first 3 months) | $500-$2,000 | $2,000-$5,000 | $5,000-$15,000 |
| Total range | $8,000-$20,000 | $25,000-$60,000 | $60,000-$130,000 |
| Timeline | 2-4 weeks | 4-8 weeks | 8-16 weeks |

GenAI features (RAG, copilots, AI agents) add 15-30% to budgets for data preparation, evaluation frameworks, and guardrails (Ideas2It, 2026). Hidden costs including model API usage, compliance requirements, and post-launch iteration typically add another 10-30%.

The biggest cost variable is not the AI itself. It is how much custom application development wraps around it. An AI MVP with a minimal chat interface costs a fraction of an AI MVP with a full dashboard, team management, integrations, and reporting.

For a complete cost breakdown with budget templates and cost optimization strategies, read our AI MVP cost breakdown guide.

Common AI MVP Examples

Not every AI MVP looks the same. Here are four common patterns we build at Downshift, each with a different architecture, cost profile, and timeline.

AI Chatbot MVP

What it does: A conversational AI agent that answers questions, handles support requests, or guides users through a workflow using natural language.

Architecture: LLM API (GPT-4o or Claude) with RAG if the chatbot needs to answer from specific company knowledge.

Real example pattern: A fintech startup builds a chatbot that answers customer questions about loan products using the company’s product documentation as the knowledge base. Users type questions in plain English; the chatbot retrieves relevant documentation, generates an answer, and cites the source.

MVP timeline: 2-4 weeks. Cost: $8,000-$25,000. Key risk: Hallucination. The chatbot invents information not in the knowledge base. Mitigate with source citations and confidence thresholds.

AI Recommendation Engine MVP

What it does: Analyzes user behavior, preferences, or input data to suggest relevant items: products, content, connections, or actions.

Architecture: Can range from collaborative filtering (traditional ML) to LLM-powered semantic matching, depending on data availability.

Real example pattern: An e-commerce startup builds a recommendation engine that suggests products based on browsing history and purchase patterns. The MVP uses a simple collaborative filtering model, and users see a “Recommended for You” section on the homepage.

MVP timeline: 4-6 weeks. Cost: $20,000-$40,000. Key risk: Cold start problem. New users have no behavior data. Mitigate with onboarding questions or content-based initial recommendations.

AI Document Processing MVP

What it does: Extracts, classifies, or summarizes information from unstructured documents: contracts, invoices, medical records, legal filings.

Architecture: LLM with structured output parsing. For high-volume processing, add a document preprocessing pipeline (OCR, chunking, metadata extraction).

Real example pattern: A legal tech startup builds a tool that extracts key terms from commercial leases: rent amounts, termination clauses, renewal dates. Lawyers upload PDFs; the tool returns a structured summary with page references.

MVP timeline: 3-6 weeks. Cost: $15,000-$40,000. Key risk: Accuracy on messy documents. Scanned PDFs, inconsistent formatting, handwritten annotations. Mitigate by constraining the MVP to clean digital documents and expanding format support after validation.
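Structured output parsing, as used in this pattern, usually means asking the model for JSON and refusing anything that does not match the expected shape. The sketch below shows one minimal way to do that with the standard library. The `LeaseTerms` fields and JSON keys are hypothetical, chosen to mirror the lease example above.

```python
import json
from dataclasses import dataclass
from datetime import date


@dataclass
class LeaseTerms:
    monthly_rent: float
    renewal_date: date
    termination_clause_page: int  # page reference shown to the lawyer


def parse_lease_terms(raw: str) -> LeaseTerms:
    """Parse and validate model output; raise ValueError on anything malformed."""
    try:
        data = json.loads(raw)
        rent = float(data["monthly_rent"])
        renewal = date.fromisoformat(data["renewal_date"])
        page = int(data["termination_clause_page"])
    except (KeyError, TypeError, ValueError) as exc:
        raise ValueError(f"model output does not match the lease schema: {exc}") from exc
    if rent <= 0 or page < 1:
        raise ValueError("rent must be positive and page numbers start at 1")
    return LeaseTerms(rent, renewal, page)


# Example of a well-formed model response (invented values).
raw_output = '{"monthly_rent": 4500, "renewal_date": "2027-01-31", "termination_clause_page": 12}'
terms = parse_lease_terms(raw_output)
print(terms)
```

A rejected output can then trigger a retry or a fallback message instead of reaching the user as a silently wrong summary.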

AI Analytics Dashboard MVP

What it does: Uses AI to analyze datasets and surface insights, anomalies, or predictions that would take a human analyst hours to find.

Architecture: LLM for natural language querying of data, plus traditional analytics/visualization on the frontend.

Real example pattern: A SaaS startup builds a dashboard where users ask questions about their business data in plain English (“What caused the revenue drop last Tuesday?”) and the AI queries the database, runs analysis, and presents the answer with charts.

MVP timeline: 4-8 weeks. Cost: $25,000-$50,000. Key risk: Query accuracy. The AI misinterprets the user’s question and returns wrong data. Mitigate by showing the generated SQL query and letting users verify before executing.
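The "show the SQL and let users verify" mitigation can be sketched as a two-step gate: check that the generated query is a single read-only statement, then execute it only once the user has approved it. The example uses an in-memory SQLite database with an invented `revenue` table. The `is_read_only` check is a deliberately simple heuristic and not a security boundary; a read-only database role is the stronger safeguard.

```python
import sqlite3
from typing import Optional


def is_read_only(sql: str) -> bool:
    """Heuristic: accept only a single statement that starts with SELECT."""
    statement = sql.strip().rstrip(";")
    return statement.lower().startswith("select") and ";" not in statement


def run_verified_query(conn: sqlite3.Connection, generated_sql: str,
                       user_approved: bool) -> Optional[list[tuple]]:
    """Execute model-generated SQL only if it is read-only and the user approved it."""
    if not is_read_only(generated_sql):
        raise ValueError("only single SELECT statements are allowed")
    if not user_approved:
        return None  # the UI shows generated_sql and waits for confirmation
    return conn.execute(generated_sql).fetchall()


# Invented sample data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE revenue (day TEXT, amount REAL)")
conn.executemany("INSERT INTO revenue VALUES (?, ?)",
                 [("2026-01-05", 1200.0), ("2026-01-06", 400.0)])

# Stand-in for what the LLM might generate from a question about the lowest-revenue day.
generated_sql = "SELECT day, amount FROM revenue ORDER BY amount ASC LIMIT 1"

pending = run_verified_query(conn, generated_sql, user_approved=False)  # nothing runs yet
rows = run_verified_query(conn, generated_sql, user_approved=True)
print(rows)  # prints [('2026-01-06', 400.0)]
```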

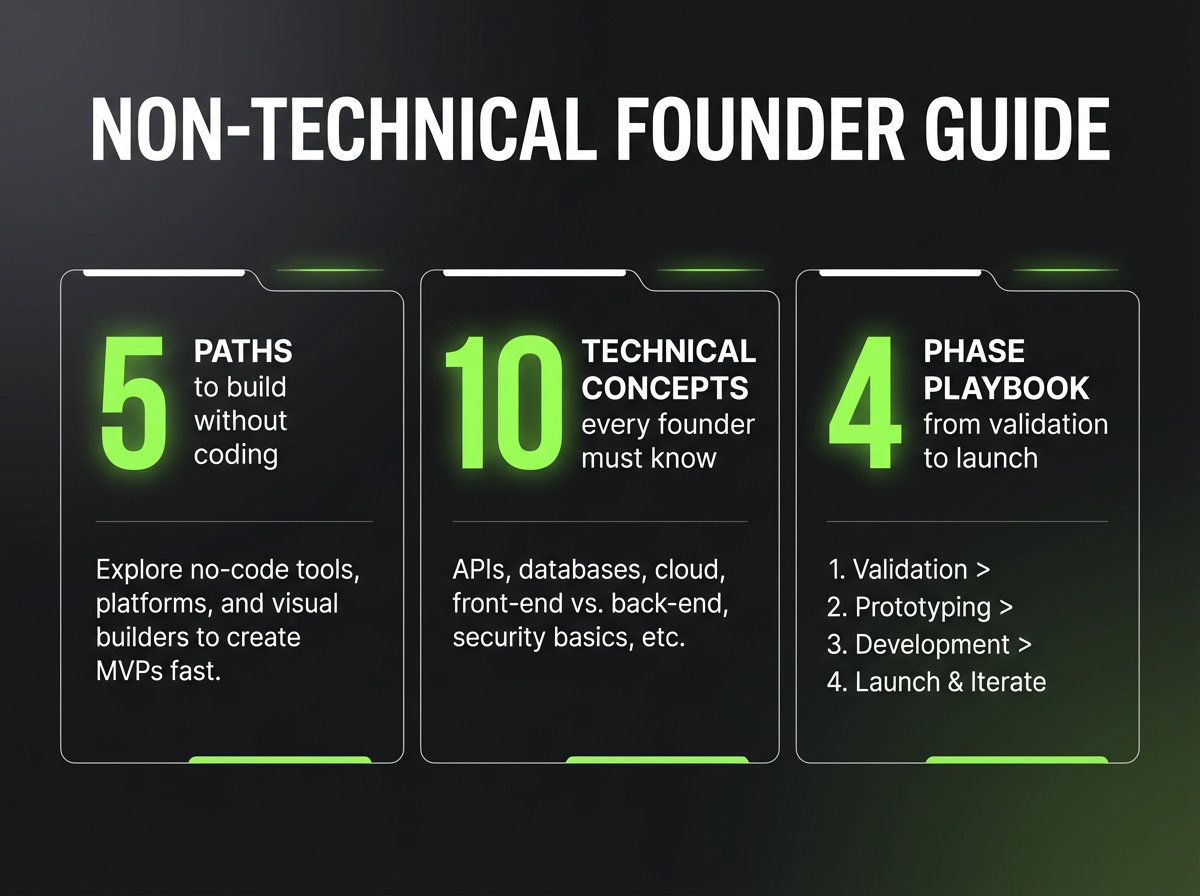

How to Build Your AI MVP: DIY vs. Partner

The options have changed. In 2024, non-technical founders had two options: learn to code or hire someone. In 2026, AI-powered development tools like Lovable, Bolt, Cursor, and v0.dev have created a genuine third path, building it yourself with AI assistance.

The right approach depends on the complexity of your AI product, not on whether you can code.

Path 1: Build It Yourself with AI Tools

When this works: Your MVP is primarily a user interface that calls an LLM API. Think chatbots, content generation tools, simple document processing, or AI-enhanced forms. The AI logic is straightforward: send a prompt, get a response, display it.

Tools that make this possible: Lovable and Bolt can generate full-stack applications from natural language descriptions. Cursor and Windsurf turn you into a developer by writing code alongside you. v0.dev generates React components. Combine these with OpenAI or Anthropic’s APIs and you can ship a functional AI MVP in days, not weeks.

Where it breaks down: When your AI product requires RAG pipelines with vector databases, custom model training, complex multi-step agent workflows, real-time data processing, or enterprise-grade security and compliance. These tools generate application code well, but AI infrastructure (retrieval pipelines, evaluation frameworks, guardrails, model orchestration) still requires experience to get right. The difference between a demo that works in a pitch meeting and a product that works reliably for paying users is often invisible until it breaks.

Cost: $0-$500/month in tool subscriptions plus API costs. Your investment is time, not money.

Path 2: Work with a Technical Partner

When this makes sense: Your AI product involves custom data pipelines, needs to handle sensitive data with compliance requirements, requires multi-model orchestration, or the AI layer is the core differentiator (not just a feature). Also when speed matters. A technical partner who has built similar products before will avoid the dead ends that cost solo builders weeks.

If you go this route, here is what to look for.

AI-specific experience, not just software development. Building AI products requires skills that most software developers do not have: prompt engineering, model evaluation, retrieval pipeline design, handling probabilistic outputs, managing API costs. Ask for specific AI projects they have built. Not “We are working on AI capabilities” but “Here is an AI product we shipped, here is the architecture, here is how it performed.” If you need broader technical leadership alongside the build, a technical co-founder service provides both the AI expertise and the strategic guidance.

Fixed-scope pricing with clear deliverables. Avoid hourly billing for MVP development. You need a fixed price for a defined scope: a specific set of features, a specific timeline, a specific deliverable. Hourly billing creates incentives to expand scope and extend timelines. Ask: “What exactly will I have at the end of this engagement, and what will it cost?”

Production-ready code, not demo code. Many AI development shops build impressive demos that collapse under real usage. Ask about error handling, monitoring, testing, and deployment. Demo code and production code are different disciplines.

Transition planning from day one. What happens after the MVP is built? Can you hire your own developers to maintain and extend it? Is the code documented? Is the architecture standard enough that another team can pick it up? A good partner builds for your independence, not your dependency.

Path 3: Hybrid. Start Solo, Bring In Help for the Hard Parts

This is increasingly the most common path. Build the application layer yourself using AI coding tools. Bring in a technical partner specifically for the AI infrastructure: the retrieval pipeline, the evaluation framework, the model orchestration, the production hardening. You control the product and the budget. The partner handles the parts that require deep AI engineering experience.

Red Flags (For Any Partner)

- “We can build anything with AI.” No, they cannot. AI has specific strengths and real limitations. A partner who does not discuss limitations is selling, not advising.

- No previous AI projects they can reference. AI MVP development is a specific skill set. General software development experience is necessary but not sufficient.

- Hourly billing with no scope definition. This is how $30,000 projects become $90,000 projects.

- They do not ask about your users. A partner who starts with technology instead of the problem your users face will build a technically interesting product that nobody uses.

- No discussion of AI model evaluation or accuracy metrics. If they do not talk about how to measure whether the AI is working, they are building a demo, not an MVP.

For a ranked list of top development partners, see our best AI MVP development companies comparison.

AI MVP Mistakes to Avoid

After building AI MVPs across fintech, healthcare, education, and consumer, we see the same mistakes repeatedly. Each one is preventable.

1. Building a “thin wrapper” with no defensible value. The most common AI startup failure in 2026 is building a product that is just a user interface on top of an API call to OpenAI or Anthropic. These products have zero defensibility. Anyone can replicate them in days. Your AI MVP must prove that your product adds value beyond what the raw API provides. That value comes from proprietary data, a specialized workflow, domain expertise encoded in the system, or a user experience optimized for a specific job.

2. Overbuilding the first version. Feature creep is the second-most common MVP killer. Your first version needs one core AI feature that works well. Not three AI features that work poorly. Not one AI feature plus user management, billing, reporting, integrations, and an admin dashboard. Scope expansion is how 4-week projects become 4-month projects, and 4-month projects run out of money before they find users.

3. Ignoring data quality from the start. Poor data quality causes up to 60% of AI project failures (Gartner, 2025). For RAG-based MVPs, this means your knowledge base must be curated, current, and correctly chunked. For ML-based MVPs, this means your training data must be representative, labeled correctly, and large enough to be statistically meaningful. Garbage data in produces garbage AI outputs. No model architecture fixes bad inputs.

4. Skipping AI evaluation frameworks. If you cannot measure whether your AI is producing good outputs, you cannot improve it. Build evaluation into the MVP from day one: automated accuracy metrics, user feedback capture, output logging, and A/B testing infrastructure. The startups that iterate fastest are the ones that know exactly where their AI fails.

5. Mispricing because you ignored API costs. OpenAI charges per token. Anthropic charges per token. Every AI query has a cost. If your product encourages heavy usage (long conversations, large document processing, frequent queries), your per-user cost can be substantial. We have seen startups price at $29/month for a product that costs $45/month per user to serve. Run the unit economics before you set pricing, not after.

6. Confusing a demo with validation. A demo that impresses investors in a meeting is not validation. Validation means real users with real workflows using your product repeatedly and getting measurable value from it. The AI startup mistakes that kill companies are usually not technical. They are founders who mistake enthusiasm for demand.

7. Building custom models when APIs will do. Training a custom model requires data, compute, expertise, and time. For most MVP use cases, a well-prompted foundation model API produces results that are good enough to validate the market. Custom models are an optimization. Build them after you have proven the market, not before. MIT estimates that 95% of generative AI pilots fail to reach production (Mind the Product, 2025), and overengineering is a primary contributor.

FAQ

How much does it cost to build an AI MVP?

AI MVP development costs range from $8,000 to $130,000 depending on complexity. A simple LLM-based chatbot MVP runs $8,000-$20,000. A moderate RAG-based application runs $25,000-$60,000. Complex MVPs with custom ML models run $60,000-$130,000. These ranges include AI layer development, application development, data preparation, and initial infrastructure costs. API usage costs ($500-$5,000/month) are additional and ongoing.
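Because API usage is billed per token, the ongoing cost per user can be estimated before launch. The sketch below shows the arithmetic under stated assumptions: the function names are hypothetical, and the token prices passed in are placeholders, not any provider's actual rates. Substitute the current prices from your provider's pricing page.

```python
def monthly_cost_per_user(
    queries_per_month: int,
    input_tokens_per_query: int,
    output_tokens_per_query: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate the monthly LLM API cost (in dollars) to serve one user.

    Prices are dollars per one million tokens. Input and output tokens
    are priced separately, since providers typically bill them at
    different rates.
    """
    cost_per_query = (
        input_tokens_per_query * input_price_per_million
        + output_tokens_per_query * output_price_per_million
    ) / 1_000_000
    return queries_per_month * cost_per_query


def gross_margin(price_per_month: float, cost_per_month: float) -> float:
    """Return gross margin as a fraction of price (negative means a loss)."""
    if price_per_month <= 0:
        raise ValueError("price_per_month must be positive")
    return (price_per_month - cost_per_month) / price_per_month


# Illustrative numbers only: a heavy user making 1,000 queries a month,
# each with 6,000 input tokens and 1,000 output tokens, at placeholder
# prices of $5 and $15 per million tokens.
cost = monthly_cost_per_user(1000, 6000, 1000, 5.0, 15.0)
margin = gross_margin(29.0, cost)
print(f"cost per user: ${cost:.2f}/month, margin at $29: {margin:.0%}")
```

With these placeholder inputs the estimate comes to about $45 per user per month, which is a loss at a $29 price point. The useful part is not the specific figure but rerunning the calculation with your own usage assumptions.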

How long does it take to build an AI MVP?

Timelines range from 2 to 16 weeks depending on architecture complexity. LLM API-based MVPs (chatbots, content tools) take 2-4 weeks. RAG-based applications take 3-6 weeks. MVPs requiring custom ML model training take 8-16 weeks. AI-assisted development tools have compressed these timelines by 40-60% compared to 2023 baselines, but AI-specific requirements (data preparation, evaluation, guardrails) still add time that traditional MVPs do not require.

What features should an AI MVP include?

The minimum feature set for an AI MVP includes: one core AI-powered feature (the thing that proves your value proposition), a simple user interface for the core action, input validation and error handling, an output-quality feedback mechanism (thumbs up/down), basic authentication, and request logging for AI observability. Do not include multiple AI features, admin dashboards, billing systems, advanced analytics, team management, or integrations. Those come after validation.
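Two items on that list, request logging and thumbs up/down feedback, can share one small data structure. The following is a minimal in-memory sketch, assuming a single process and no database; `RequestLog` and `RequestLogger` are hypothetical names, and a deployed MVP would more likely write these records to PostgreSQL or a logging service.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class RequestLog:
    request_id: str
    timestamp: float
    prompt: str
    output: str
    latency_ms: float
    feedback: Optional[str] = None  # "up", "down", or None if not rated


class RequestLogger:
    """Keeps one record per AI request and attaches user feedback to it."""

    def __init__(self) -> None:
        self._records: dict[str, RequestLog] = {}

    def log_request(self, prompt: str, output: str, latency_ms: float) -> str:
        """Store a request and return its id so the UI can send feedback."""
        request_id = str(uuid.uuid4())
        self._records[request_id] = RequestLog(
            request_id, time.time(), prompt, output, latency_ms
        )
        return request_id

    def record_feedback(self, request_id: str, feedback: str) -> None:
        if feedback not in ("up", "down"):
            raise ValueError("feedback must be 'up' or 'down'")
        self._records[request_id].feedback = feedback

    def thumbs_up_rate(self) -> Optional[float]:
        """Share of rated requests marked 'up', or None if nothing is rated."""
        rated = [r for r in self._records.values() if r.feedback is not None]
        if not rated:
            return None
        return sum(r.feedback == "up" for r in rated) / len(rated)

    def to_jsonl(self) -> str:
        """Serialize all records as JSON Lines for later analysis."""
        return "\n".join(json.dumps(asdict(r)) for r in self._records.values())
```

Keeping the prompt, the output, and the rating in one record means every thumbs-down can be traced back to the exact input that produced it.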

How do I build an AI product with no coding experience?

The options have shifted dramatically. AI-powered development tools like Lovable, Bolt, and Cursor now let non-technical founders build functional applications, including AI-powered ones, without traditional coding skills. For simpler AI products (chatbots, content tools, LLM wrappers), you can genuinely build your own MVP using these tools plus an LLM API like OpenAI or Anthropic. For more complex AI products that require custom data pipelines, RAG infrastructure, or multi-model orchestration, you will likely need a technical partner, either a technical co-founder or an AI development partner. The hybrid approach is increasingly common: build the application layer yourself, bring in expertise for the AI infrastructure.

What is the difference between an AI prototype and an AI MVP?

An AI prototype demonstrates that an AI capability works technically. It answers: “Can AI do this?” An AI MVP demonstrates that users want an AI product that does this. It answers: “Will people use and pay for this?” Prototypes are internal tools. They prove feasibility to your team. MVPs are external products. They prove market demand to users and investors. An AI prototype might be a Jupyter notebook showing your model’s accuracy on a test dataset. An AI MVP is a deployed web application where users upload documents and get AI-generated analyses. Build the prototype first (days to 1 week), then the MVP (2-16 weeks, depending on architecture).

What tech stack should I use for an AI MVP?

For most AI MVPs in 2026: Next.js or React (frontend), Node.js or Python FastAPI (backend), OpenAI or Anthropic API (LLM layer), LangChain or LlamaIndex (AI orchestration), PostgreSQL (database), and Vercel or Railway (deployment). Add pgvector or Pinecone for RAG-based products. Add Hugging Face and PyTorch for custom ML. Use Redis for caching API responses to reduce costs. See our tech stack recommendations table above for a complete breakdown by component.
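The response-caching idea can be illustrated without any infrastructure. The sketch below swaps Redis for a plain in-memory dictionary so it runs on its own; the `ResponseCache` class and the `call_model` parameter are hypothetical, and `call_model` stands in for whatever function actually calls your LLM provider. In production the dictionary would be replaced by a shared store such as Redis, usually with an expiry time on each entry.

```python
import hashlib
import json
from typing import Callable


class ResponseCache:
    """Caches model responses so identical requests are only paid for once."""

    def __init__(self, call_model: Callable[[str, str], str]) -> None:
        # call_model(model, prompt) -> response text (hypothetical signature)
        self._call_model = call_model
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Hash model and prompt together so the same prompt sent to a
        # different model does not return the wrong cached answer.
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._call_model(model, prompt)
        self._store[key] = response
        return response
```

Exact-match caching like this only pays off when users repeat the same request, for example common questions against a fixed knowledge base. It is less useful for free-form conversations, where prompts rarely repeat.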

How do I validate my AI startup idea before building an MVP?

Validate in three stages. First, problem validation: interview 15-30 potential users about their current workflow. Do they have the problem you think they have? How do they solve it today? How much time/money does it cost them? Second, solution validation: build a prototype (not an MVP) that demonstrates the AI capability. Show it to potential users and gauge reaction. Not “Is this cool?” but “Would you use this every week?” Third, willingness-to-pay validation: describe the product and a price point. Would they pay? How much? At what frequency? Only after all three stages confirm demand should you invest in building the full AI MVP.

What are the most common AI MVP mistakes?

The seven most common mistakes are: building a thin wrapper with no defensible value, overbuilding the first version, ignoring data quality, skipping AI evaluation frameworks, mispricing due to uncalculated API costs, confusing a demo with market validation, and training custom models when API calls would suffice. See the mistakes section above for detailed explanations and prevention strategies for each.

Build Your AI MVP the Right Way

The AI market is not slowing down. $202 billion in funding in 2025 signals that investors, enterprises, and users all want AI products. The question is not whether to build. It is how to build smart enough to avoid the 90% failure rate.

An AI MVP is how you answer that question with evidence instead of assumptions. Define the value proposition. Choose the right architecture. Build the core feature. Validate with real users. Iterate on what the data tells you.

The founders who ship AI MVPs in weeks, not months, get to market faster, spend less, learn more, and raise capital on traction instead of promises. Whether you build it yourself with AI coding tools, bring in a technical partner, or combine both approaches, the framework is the same: start with the problem, build the minimum AI capability that solves it, and let user behavior tell you what to build next.

Need help with the hard parts? Book a free discovery call. 30 minutes, no pitch. We will walk through your AI product idea, assess what you can build yourself versus where you need technical depth, and map out a plan that fits your budget and timeline.