Most AI MVPs fail because founders build the product shell first and bolt on the AI later. That is backwards. The AI is the product. If the AI does not work, nothing else matters. Not your landing page, not your onboarding flow, not your Stripe integration.

At Downshift, we have worked with AI startups across fintech, healthcare, education, and consumer products. Sometimes we build alongside founders who use Lovable, Cursor, or v0.dev for the frontend; sometimes we handle the full build. The pattern that works is always the same: prove the AI first, wrap the product around it second, and ship in weeks instead of months. This is the exact process.

What Makes Building an AI MVP Different

Traditional MVPs are deterministic. A user clicks a button, the app does the thing. Every time. AI MVPs are probabilistic. A user submits a query, and the model gives a different answer depending on context, phrasing, and sampling settings such as temperature.

That single difference changes three things about how you build.

You cannot spec the output. In a traditional app, you write acceptance criteria: “When user clicks Submit, order is created.” In an AI app, the output is variable. You define quality ranges, not exact outputs. Your MVP needs evaluation criteria from day one, not after launch.

Data is a prerequisite, not a byproduct. Traditional MVPs launch with empty databases. Users fill them. AI MVPs often need data before the product works at all. A RAG-based chatbot needs a knowledge base. A classification tool needs labeled examples. This front-loads your timeline.

Costs scale with usage, not just users. Each API call to GPT-4o or Claude costs money. A power user who sends 200 queries per day costs you 40x more than a casual user who sends 5. Your MVP must validate unit economics alongside product-market fit.
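To make the usage math concrete, here is a minimal sketch of per-user unit economics. The cost per query and the subscription price are assumptions chosen for illustration, not real provider pricing; swap in your own measured numbers.

```python
from dataclasses import dataclass


@dataclass
class UsageProfile:
    """Hypothetical usage profile for one user segment."""
    name: str
    queries_per_day: int


def monthly_cost(profile: UsageProfile, cost_per_query: float, days: int = 30) -> float:
    """Estimated monthly API spend for one user in this segment."""
    return profile.queries_per_day * cost_per_query * days


# Assumed figures for illustration only.
COST_PER_QUERY = 0.01   # blended USD cost of one AI call
MONTHLY_PRICE = 20.0    # flat subscription price in USD

casual = UsageProfile("casual", queries_per_day=5)
power = UsageProfile("power", queries_per_day=200)

casual_cost = monthly_cost(casual, COST_PER_QUERY)
power_cost = monthly_cost(power, COST_PER_QUERY)
ratio = power_cost / casual_cost

for profile, cost in ((casual, casual_cost), (power, power_cost)):
    margin = MONTHLY_PRICE - cost
    print(f"{profile.name}: cost ${cost:.2f}/month, margin ${margin:.2f}/month")
print(f"power user costs {ratio:.0f}x the casual user")
```

Under these assumed numbers, a flat price that is comfortable for the casual user loses money on the power user, which is the kind of finding your MVP should surface early.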

These differences are why generic MVP development advice fails for AI products. You need a process built specifically for probabilistic systems.

Before You Start: AI MVP Pre-Flight Checklist

Do not write a single line of code until you have answered three questions. Skipping this step is why founders burn $50,000 and three months building something nobody needs.

Validate the AI Hypothesis

Your AI hypothesis is not “we will use AI.” It is a specific claim: “AI can do X better, faster, or cheaper than the current method.” Before building, pressure-test that claim.

- Can a human do this task today? If yes, how long does it take and what does it cost? That is your benchmark.

- Does AI actually improve the outcome? Run the test manually. Take 20 real inputs, feed them to ChatGPT or Claude through the API, and grade the outputs. If the AI gets 12 out of 20 right, you know the quality floor before you build anything.

- Would users pay for this improvement? Talk to 10 potential customers. Not “would you use an AI tool that does X?” That question always gets a yes. Instead: “How much time do you spend on X per week? What would you pay to cut that in half?”
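The manual grading step above needs nothing more than a spreadsheet, but a few lines of code keep it repeatable. This is a minimal sketch: the grades list is hypothetical data, and the 0.8 quality bar is an assumed target you would set yourself from the human benchmark.

```python
def pass_rate(grades: list[bool]) -> float:
    """Share of graded outputs a reviewer marked as correct."""
    if not grades:
        raise ValueError("grade at least one output")
    return sum(grades) / len(grades)


# Hypothetical results: 20 real inputs, graded by hand as right (True) or wrong (False).
grades = [True] * 12 + [False] * 8

# Assumed target for illustration; derive yours from the human benchmark.
QUALITY_BAR = 0.8

rate = pass_rate(grades)
gap = QUALITY_BAR - rate
print(f"quality floor: {rate:.0%}, gap to target: {gap:.0%}")
```

The number itself matters less than having it before you commit to a build. It tells you how much prompt and retrieval work sits between today's output and something users would pay for.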

Define Your Data Strategy

Every AI MVP needs data. The question is how much and where it comes from.

- LLM-only apps (chatbots, content generators, summarizers): You may need zero proprietary data. The model’s training data is your starting point. You add domain specificity through prompts and retrieval.

- RAG apps (knowledge bases, document Q&A, support bots): You need a curated corpus. Plan for 2-4 weeks of data collection and structuring before the AI works well.

- Custom model apps (classification, prediction, anomaly detection): You need labeled training data. Hundreds to thousands of examples. This is the longest data runway.

Know which category your product falls into before you choose architecture.

Choose Build vs Buy for AI Components

Not every AI feature needs to be custom. Here is the decision:

- Buy (use an API): When the AI capability is general-purpose. Summarization, translation, entity extraction, conversational AI. GPT-4o, Claude, and Gemini handle these well out of the box.

- Build (train or fine-tune): When the AI capability is your competitive advantage. Proprietary scoring algorithms, domain-specific classification, custom recommendation engines.

- Hybrid: Buy the foundation model, build the domain layer. This is where 80% of AI MVPs land. You use an LLM API for the heavy lifting and add retrieval, guardrails, and post-processing that make it yours.

Step 1: Define the Problem and AI Value Proposition

Write one sentence that describes what your AI does and why it matters. Not a tagline. A functional statement.

Bad: “We use AI to help businesses make better decisions.”

Good: “We analyze SEC filings in 30 seconds and flag material risk factors that take a compliance analyst 4 hours to find manually.”

The good version has four elements: what it does (analyze SEC filings), how fast (30 seconds), what problem it solves (flagging risk factors), and what it replaces (4 hours of manual analyst work). If you cannot write this sentence, you do not have a product yet. You have a technology looking for a problem.

Your value proposition shapes every downstream decision. It tells you what to build first, what accuracy threshold matters, and what “good enough” looks like for your MVP.

Step 2: Choose Your AI Architecture

Your architecture decision locks in your cost structure, timeline, and technical ceiling. There are three paths.

LLM-Based (ChatGPT, Claude, Gemini APIs)

Best for: Conversational products, content generation, document analysis, search, summarization.

You send prompts to a hosted model and get responses back. No training, no infrastructure, no GPU costs. You pay per token. Time to first working prototype: days, not weeks.

The tradeoff is control. You are renting intelligence from OpenAI, Anthropic, or Google. Model behavior changes when they update. Pricing changes when they decide it changes. Your product’s core capability lives on someone else’s servers.

Practical tip: Start with the cheapest model that meets your quality bar. Most founders jump straight to GPT-4o or Claude Opus. For many use cases, GPT-4o-mini or Claude Haiku gets you 85% of the quality at 10% of the cost. Test cheap first. Upgrade only where quality demands it.
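One way to apply "test cheap first" is to score each candidate model on the same test set and pick the cheapest one that clears your bar. The sketch below assumes you already have those scores; the model labels, costs, and quality numbers are placeholders, not benchmarks.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelResult:
    name: str                    # placeholder label, not a real model ID
    cost_per_1k_queries: float   # assumed USD cost from your own usage logs
    avg_quality: float           # average 1-5 grade on your own test set


def cheapest_passing(results: list[ModelResult], quality_bar: float) -> Optional[ModelResult]:
    """Return the cheapest model whose average quality meets the bar, if any."""
    passing = [r for r in results if r.avg_quality >= quality_bar]
    return min(passing, key=lambda r: r.cost_per_1k_queries, default=None)


# Hypothetical evaluation results.
candidates = [
    ModelResult("small-model", cost_per_1k_queries=1.0, avg_quality=3.8),
    ModelResult("large-model", cost_per_1k_queries=10.0, avg_quality=4.5),
]

choice = cheapest_passing(candidates, quality_bar=3.5)
print(choice.name if choice else "no model meets the bar")
```

Raising `quality_bar` to 4.0 in this example would select the larger model, which is the "upgrade only where quality demands it" case.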

Custom ML Model

Best for: Classification, prediction, anomaly detection, recommendation engines. Tasks where you have proprietary data and the model IS the moat.

You train a model on your own data. You own the weights. Nobody else has your model. The tradeoff is time and expertise. Training a custom model requires ML engineering talent, labeled data, and weeks of iteration.

Practical tip: Unless ML is your core product, do not start here. Build your MVP on an LLM API, validate the market, then invest in custom models once you have revenue and data.

Hybrid Approach

Best for: 80% of AI MVPs. You use an LLM for language understanding and generation, then add your own retrieval layer, business logic, and post-processing.

RAG (Retrieval-Augmented Generation) is the most common hybrid pattern. Your app retrieves relevant documents from a vector database, feeds them to an LLM as context, and the model generates a response grounded in your data. This gives you LLM-level language ability with domain-specific accuracy.

Practical tip: Pinecone, Weaviate, and pgvector are the three vector database options worth evaluating for an MVP. pgvector wins if you are already using PostgreSQL. One less service to manage.
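The retrieve-then-generate flow can be sketched in a few lines. This toy version uses word counts in place of a real embedding model and an in-memory list in place of a vector database, so treat `embed`, `retrieve`, and `build_prompt` as illustrative names rather than any library's API. The final LLM call is left out.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Assemble the grounded prompt that would be sent to the LLM."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only the context below.\n\nContext:\n{joined}\n\nQuestion: {query}"


# Hypothetical knowledge base.
DOCS = [
    "Refunds are issued within 14 days of purchase.",
    "Support is available Monday through Friday.",
    "Annual plans include two months free.",
]

question = "How many days do I have to get a refund?"
top = retrieve(question, DOCS, k=1)
prompt = build_prompt(question, top)
print(prompt)
```

In a real build, `embed` becomes a call to an embedding model and `retrieve` becomes a similarity query against your vector store. The shape of the pipeline stays the same.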

Step 3: Select Your Tech Stack

Pick boring, proven technology for everything except the AI layer. Your stack should look something like this:

| Layer | Recommended Tools | Why |
|---|---|---|
| AI/LLM | OpenAI API, Anthropic API, or Google Gemini | Fastest path to working AI. Switch later if needed. |
| Backend | Node.js (Express/Fastify) or Python (FastAPI) | Large talent pools. Extensive AI library support. |
| Database | PostgreSQL + pgvector | One database for structured data AND vector search. |
| Frontend | Next.js or React + Vite | Fast to build. Easy to iterate. |
| Auth | Clerk, Supabase Auth, or BetterAuth | Do not build auth. Buy it. |
| Hosting | Vercel, Railway, or AWS | Railway for backend simplicity. Vercel for Next.js. |
| Queue/Jobs | BullMQ (Redis-backed) | AI calls are slow. Async processing is mandatory. |

Two rules: do not build what you can buy, and do not optimize what you have not validated. The tech stack exists to get your AI in front of users. Nothing more.
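The Queue/Jobs row is the one founders most often skip, so here is a minimal sketch of the idea: accept work immediately, then process slow AI calls in background workers. It uses Python's standard `asyncio.Queue` as an in-process stand-in for a Redis-backed queue such as BullMQ, and `fake_model_call` is a hypothetical placeholder for a real API call.

```python
import asyncio


async def fake_model_call(prompt: str) -> str:
    """Hypothetical stand-in for a slow LLM API call."""
    await asyncio.sleep(0.01)
    return f"summary of: {prompt}"


async def worker(queue: asyncio.Queue, results: dict) -> None:
    """Pull jobs off the queue forever and store each result by job ID."""
    while True:
        job_id, prompt = await queue.get()
        try:
            results[job_id] = await fake_model_call(prompt)
        finally:
            queue.task_done()


async def run_jobs(prompts: list[str], concurrency: int = 2) -> dict:
    """Enqueue all prompts, process them with a fixed number of workers."""
    queue: asyncio.Queue = asyncio.Queue()
    results: dict = {}
    for job_id, prompt in enumerate(prompts):
        queue.put_nowait((job_id, prompt))
    workers = [asyncio.create_task(worker(queue, results)) for _ in range(concurrency)]
    await queue.join()  # wait until every job has been marked done
    for w in workers:
        w.cancel()      # workers loop forever, so stop them explicitly
    await asyncio.gather(*workers, return_exceptions=True)
    return results


results = asyncio.run(run_jobs(["doc A", "doc B", "doc C"]))
print(results)
```

An in-process queue loses jobs when the server restarts, which is why a durable, Redis-backed queue is the better fit once real users depend on the results.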

Step 4: Build the Core AI Feature First

This is where most founders go wrong. They build login, onboarding, settings, billing, and a dashboard, and the AI feature is the last thing they wire up. Invert this.

The AI-First Development Approach

Week 1: Get the AI working in isolation. A script, a notebook, a CLI tool. Feed it real inputs, evaluate the outputs. No UI. No database. Just the AI doing its job.

Week 2: Wrap it in a minimal API. One endpoint that accepts input and returns the AI’s output. Test it with Postman or curl.

Week 3: Build the thinnest possible UI around that API. An input field, a submit button, and an output display. Ugly is fine. Functional is mandatory.

This sequence forces you to confront the hardest problem first. If the AI does not produce useful output, you find out in week 1, not week 6 after building an entire product around a capability that does not work.
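The week 2 step, one endpoint that accepts input and returns the AI's output, can be this small. The sketch below is a standard-library WSGI app. `run_ai` is a hypothetical placeholder for whatever you validated in week 1, and the `/generate` route and `input`/`output` field names are assumptions.

```python
import io
import json


def run_ai(text: str) -> str:
    """Hypothetical placeholder for the function you validated in week 1."""
    return f"echo: {text.strip()}"


def _json(start_response, status: str, payload: dict):
    """Send a JSON response with the given status line."""
    body = json.dumps(payload).encode("utf-8")
    start_response(status, [("Content-Type", "application/json"),
                            ("Content-Length", str(len(body)))])
    return [body]


def app(environ, start_response):
    """WSGI app with one route: POST /generate with JSON {"input": "..."}."""
    if (environ.get("REQUEST_METHOD"), environ.get("PATH_INFO")) != ("POST", "/generate"):
        return _json(start_response, "404 Not Found", {"error": "not found"})
    try:
        length = int(environ.get("CONTENT_LENGTH") or 0)
        payload = json.loads(environ["wsgi.input"].read(length))
        text = payload["input"]
        if not isinstance(text, str):
            raise TypeError("input must be a string")
    except (ValueError, KeyError, TypeError):
        return _json(start_response, "400 Bad Request",
                     {"error": "expected JSON body with a string 'input' field"})
    return _json(start_response, "200 OK", {"output": run_ai(text)})


def call(method: str, path: str, body: bytes = b""):
    """Invoke the app in-process, without opening a socket."""
    captured = {}
    environ = {"REQUEST_METHOD": method, "PATH_INFO": path,
               "CONTENT_LENGTH": str(len(body)), "wsgi.input": io.BytesIO(body)}
    chunks = app(environ, lambda status, headers: captured.update(status=status))
    return captured["status"], json.loads(b"".join(chunks))


status, data = call("POST", "/generate", json.dumps({"input": " hello "}).encode())
print(status, data)

# To serve it locally for curl or Postman (standard library only):
# from wsgiref.simple_server import make_server
# make_server("127.0.0.1", 8000, app).serve_forever()
```

A framework like FastAPI or Express gives you the same thing with less boilerplate. The point is the scope: one route, one input, one output.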

Handling AI Uncertainty in UX

AI outputs are not always right. Your UX must account for this honestly.

- Show confidence levels when the model provides them. “High confidence” vs “This may need review” sets user expectations correctly.

- Make outputs editable. Let users correct the AI. This serves two purposes: it fixes errors in real time, and it generates training data for improving the model later.

- Provide source attribution. If your AI pulls from documents, show which documents. Users trust AI more when they can verify the source.

- Design for failure. What does the user see when the AI gives a bad answer? If the answer is “nothing” or “a spinner that never stops,” you have not thought about this enough.
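These four guidelines can live in one small function that turns a raw model result into what the UI should render. This is a sketch under assumptions: the `AIResult` shape, the 0.8 threshold, and the fallback message are all illustrative choices, not a standard.

```python
from dataclasses import dataclass, field
from typing import Optional

REVIEW_THRESHOLD = 0.8  # assumed cutoff; tune against your own graded outputs


@dataclass
class AIResult:
    """Hypothetical shape of what the AI layer returns."""
    text: str
    confidence: Optional[float] = None          # None when the model gives no score
    sources: list[str] = field(default_factory=list)


def present(result: Optional[AIResult]) -> dict:
    """Build the view model for the UI, including the failure case."""
    if result is None or not result.text.strip():
        return {
            "status": "failed",
            "message": "We could not generate an answer. Try rephrasing your request.",
            "retry": True,
        }
    if result.confidence is None:
        label = None  # do not invent a confidence the model did not provide
    elif result.confidence >= REVIEW_THRESHOLD:
        label = "High confidence"
    else:
        label = "This may need review"
    return {
        "status": "ok",
        "text": result.text,
        "label": label,
        "sources": result.sources,  # shown so users can verify the answer
        "editable": True,           # user corrections can be logged for later improvement
    }


view = present(AIResult("Clause 4 limits liability.", confidence=0.62, sources=["contract.pdf"]))
print(view["label"], view["sources"])
```

Deciding the failure branch up front means the frontend never has to guess what an empty or missing answer looks like.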

Step 5: Wrap It in a Product Shell

Now, and only now, build the product around your validated AI feature.

The product shell for an MVP is thin. It includes:

- Authentication. Use Clerk, Supabase Auth, or BetterAuth. Do not build it yourself. Do not spend more than a day on this.

- A single workflow. The user lands, completes one task with the AI, and gets value. That is the entire product for v1. One workflow. One job to be done.

- Usage tracking. You need to know what users do. Not analytics dashboards, just event logging. Who submitted what, what the AI returned, and whether the user accepted or modified the output.

- Error handling. AI APIs go down. Models time out. Rate limits hit. Your product shell catches these failures gracefully instead of showing a white screen.
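Usage tracking and error handling fit naturally around the same call. The sketch below retries transient failures with exponential backoff and appends one JSON line per interaction. `TransientAIError`, `flaky_model`, and the event fields are hypothetical names for illustration; map them to the errors your provider's SDK actually raises.

```python
import json
import time


class TransientAIError(Exception):
    """Hypothetical error for timeouts, rate limits, and provider outages."""


def call_with_retry(fn, prompt, attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call fn(prompt), retrying transient failures with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fn(prompt)
        except TransientAIError:
            if attempt == attempts - 1:
                raise  # out of retries: let the caller show a friendly error
            sleep(base_delay * 2 ** attempt)


def log_event(log: list, user_id: str, prompt: str, output: str, action: str) -> None:
    """Record who submitted what, what the AI returned, and what the user did."""
    log.append(json.dumps({"user_id": user_id, "prompt": prompt,
                           "output": output, "action": action}))


calls = {"n": 0}


def flaky_model(prompt: str) -> str:
    """Hypothetical model call that fails twice, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientAIError("rate limited")
    return f"answer to: {prompt}"


delays: list = []   # capture the backoff delays instead of actually sleeping
events: list = []
question = "What is our refund policy?"
output = call_with_retry(flaky_model, question, sleep=delays.append)
log_event(events, "user_123", question, output, action="accepted")
print(output, delays, len(events))
```

When the final attempt still fails, the exception propagates so the product shell can show a clear message instead of a white screen.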

What the product shell does NOT include for an MVP: billing, admin panels, team features, integrations, notification systems, multiple AI features. All of those come after validation.

Step 6: Test with Real Users

Ship the MVP to 10-20 users in your target market. Not friends. Not other founders. Actual potential customers who have the problem you are solving.

AI-Specific Testing Challenges

Testing AI products is different from testing traditional software because the same input can produce different outputs. You cannot write a test that says “input X must produce output Y.” Instead, you test for quality ranges.

- Run 50-100 test queries across your core use case. Grade each output on a 1-5 scale. Your quality floor for launch should be 3.5+ average with zero 1-ratings.

- Test edge cases manually. What happens with empty inputs? Extremely long inputs? Inputs in the wrong language? Adversarial inputs designed to break the model?

- Measure latency. Users expect sub-3-second responses for conversational AI. If your RAG pipeline takes 8 seconds, that is a UX problem you must solve before launch.
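The launch criteria above are simple enough to encode as a gate you run before every release. The rating rule (3.5+ average, zero 1-ratings) and the 3-second budget come from the text. Checking the 95th percentile rather than the average latency is our assumption, and all sample data is hypothetical.

```python
import math
from statistics import mean


def quality_gate(ratings: list[int], min_avg: float = 3.5) -> bool:
    """Pass only if the 1-5 ratings average at least min_avg with no 1-ratings."""
    return bool(ratings) and mean(ratings) >= min_avg and min(ratings) > 1


def latency_p95(latencies_s: list[float]) -> float:
    """95th percentile latency in seconds, using the nearest-rank method."""
    if not latencies_s:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_s)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


# Hypothetical grading and timing results.
ratings = [4, 4, 3, 5, 4]
latencies = [1.2, 2.1, 1.8, 2.6, 8.0]

quality_ok = quality_gate(ratings)
latency_ok = latency_p95(latencies) < 3.0
print(f"quality ok: {quality_ok}, latency ok: {latency_ok}")
```

Here the ratings pass but the single 8-second response fails the latency check, which an average would have hidden.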

Evaluating AI Output Quality

Build a simple evaluation framework before you launch. It does not need to be sophisticated.

- Create a test set of 50 representative inputs with expert-graded ideal outputs.

- Run your AI against the test set weekly.

- Track accuracy, relevance, and completeness scores over time.

- Set a quality threshold. Below the threshold, you iterate on prompts and retrieval. Above it, you ship.

This evaluation framework becomes your quality moat as you grow. Competitors who launch without one cannot systematically improve. You can.
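A weekly evaluation run can start as a script that averages expert grades and compares them to your threshold. In this sketch the 1-5 scale, the 3.5 threshold, and the rule that every metric must clear it are assumptions; the grades are made-up examples.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Graded:
    """Expert grades for one test-set output, each on an assumed 1-5 scale."""
    accuracy: float
    relevance: float
    completeness: float


def summarize(run: list[Graded]) -> dict[str, float]:
    """Average each metric across one evaluation run."""
    return {
        "accuracy": mean([g.accuracy for g in run]),
        "relevance": mean([g.relevance for g in run]),
        "completeness": mean([g.completeness for g in run]),
    }


def decide(summary: dict[str, float], threshold: float = 3.5) -> str:
    """Ship only when the weakest metric clears the threshold (assumed rule)."""
    return "ship" if min(summary.values()) >= threshold else "iterate"


# Hypothetical weekly runs over the same test set (shortened to two items each).
history = {
    "week 1": [Graded(3.0, 4.0, 3.0), Graded(4.0, 3.0, 3.0)],
    "week 2": [Graded(4.0, 4.0, 4.0), Graded(4.0, 5.0, 3.0)],
}

decisions = {week: decide(summarize(run)) for week, run in history.items()}
for week, run in history.items():
    print(week, summarize(run), decisions[week])
```

Keeping every run in `history` is what lets you see whether a prompt or retrieval change actually moved the scores.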

Step 7: Launch and Iterate

Your AI MVP launch is not a press event. It is a controlled experiment.

Week 1-2 post-launch: Watch everything. Monitor error rates, API costs, response quality, and user behavior. Talk to every user. Ask what surprised them, what frustrated them, and what they expected the AI to do that it did not.

Week 3-4: Fix the top 3 issues users report. This is almost always prompt engineering, not code changes. Adjust your system prompts, add examples to your retrieval corpus, tighten your guardrails.

Month 2: Decide whether to double down or pivot. You have enough data. Users either come back and use the AI repeatedly (signal), or they try it once and disappear (noise). If retention is below 20% weekly, the AI is not solving a real problem. Change the approach or change the problem.
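The month 2 decision is easier with the retention number in front of you. This sketch assumes one definition of weekly retention: the share of first-week users who are active again in each later week. The user IDs are hypothetical, and you would build the weekly sets from your usage logs.

```python
def cohort_retention(weeks: list[set[str]]) -> list[float]:
    """Share of week-0 users who were active again in each later week."""
    if not weeks or not weeks[0]:
        raise ValueError("need a non-empty first-week cohort")
    cohort = weeks[0]
    return [len(cohort & active) / len(cohort) for active in weeks[1:]]


def verdict(retention: list[float], floor: float = 0.20) -> str:
    """Compare the most recent week's retention to the 20% floor."""
    if not retention:
        raise ValueError("need at least one follow-up week")
    return "double down" if retention[-1] >= floor else "change the approach or the problem"


# Hypothetical active users per week, keyed by user ID.
weeks = [
    set("abcdefghij"),   # week 0: ten users tried the product
    {"a", "b", "c"},     # week 1: three came back
    {"a"},               # week 2: one came back
]

retention = cohort_retention(weeks)
print([f"{r:.0%}" for r in retention], verdict(retention))
```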

The iteration cycle for AI products is faster than traditional products because your improvements come from better prompts, better retrieval, and better data, not from shipping new features.

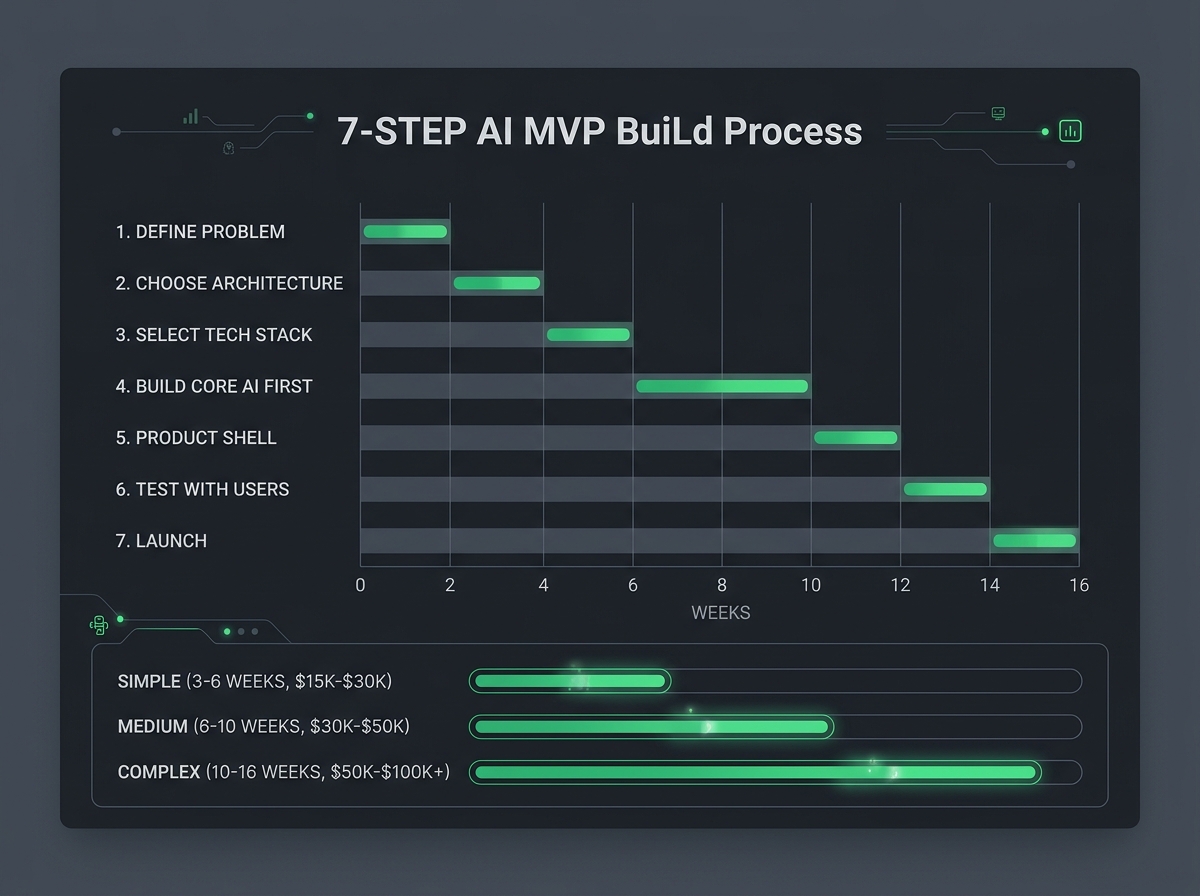

AI MVP Timeline: How Long Does It Take?

Timelines depend on complexity. Here is what we see across real projects:

| Complexity | Examples | Timeline | Estimated Cost |
|---|---|---|---|
| Simple | LLM chatbot, content generator, document summarizer | 3-6 weeks | $15,000-$30,000 |
| Medium | RAG knowledge base, multi-step AI workflows, AI-powered search | 6-10 weeks | $30,000-$50,000 |
| Complex | Custom ML models, multi-modal AI, real-time data pipelines | 10-16 weeks | $50,000-$100,000+ |

Most startups land in the Medium category. A RAG-based product with a clean UI, authentication, and basic usage tracking takes 6-10 weeks to build properly.

These timelines assume a small, experienced team. A solo founder using AI coding tools (Lovable, Cursor, v0.dev) can build the frontend and basic workflows faster than ever, but the AI infrastructure and production hardening still take experienced hands. This is where a collaborative technical partner can compress the timeline, handling architecture, AI infrastructure, and scaling while you build everything else.

FAQ

How long does it take to build an AI MVP?

3-16 weeks depending on complexity. A simple LLM-based chatbot or content tool takes 3-6 weeks. A RAG-powered knowledge base or multi-step AI workflow takes 6-10 weeks. Products requiring custom ML models or real-time data processing take 10-16 weeks. These timelines assume a team with AI product experience.

What should an AI MVP include?

One core AI feature that solves a specific problem, authentication, a single user workflow, usage tracking, error handling, and nothing else. The goal is to validate that the AI delivers value to real users. Features like billing, team management, integrations, and admin tools come after validation.

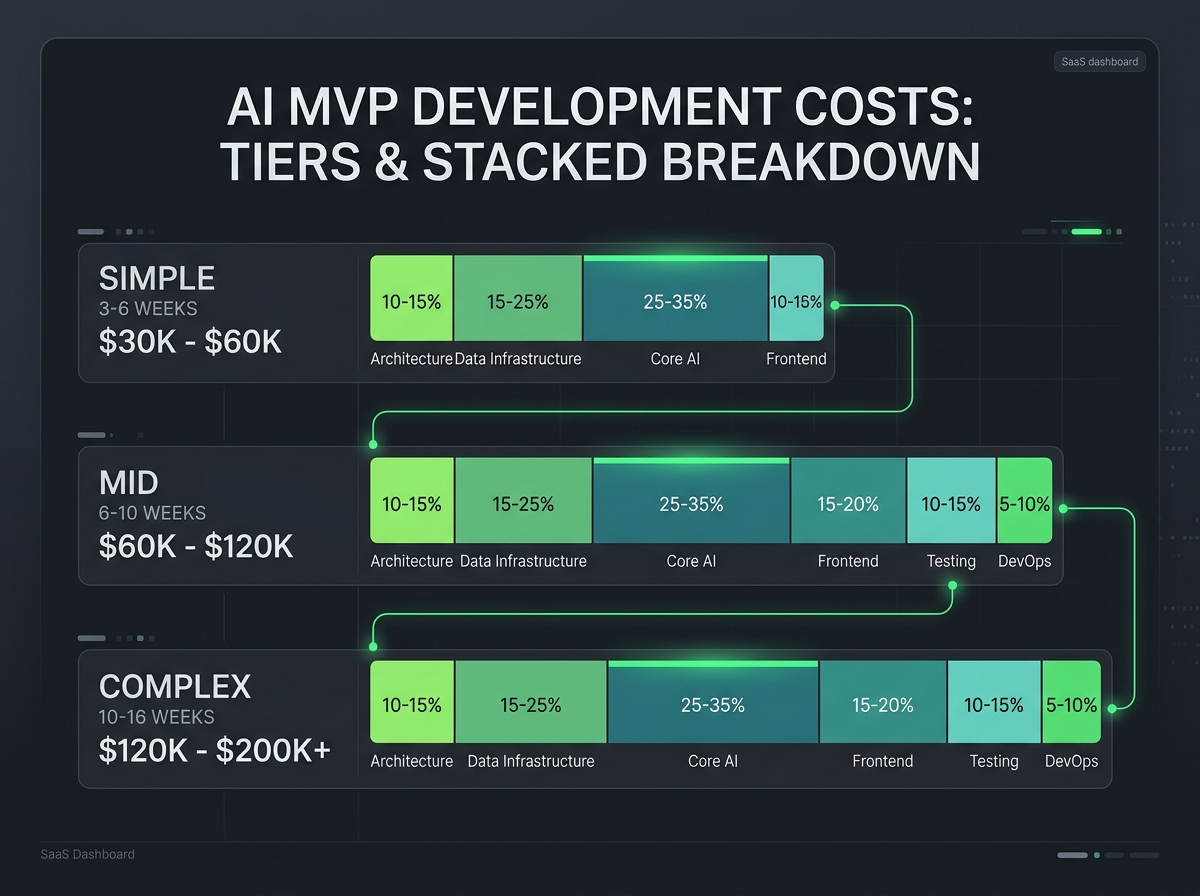

How much does an AI MVP cost?

$15,000-$100,000+ depending on complexity. Simple LLM API integrations run $15,000-$30,000. Mid-complexity RAG applications run $30,000-$50,000. Complex custom ML projects run $50,000-$100,000 or more. The biggest variable is whether you use pre-trained models (cheaper, faster) or train custom models (expensive, slower). Read our complete AI MVP cost breakdown for detailed pricing across different architectures.

Can I build an AI MVP with no-code tools?

Yes, more than ever. In 2026, AI coding tools like Lovable, Bolt, Cursor, and v0.dev let founders build functional products, not just prototypes. You can build the frontend, basic workflows, integrations, and even simple AI features yourself. Where these tools hit a ceiling: complex AI infrastructure, custom prompt chains with guardrails, vector search tuning, production hardening, scaling, and security. Start building with AI tools from day one. Bring in expert help for the genuinely hard infrastructure and architecture pieces when you need it.

What is the difference between an AI prototype and an MVP?

A prototype proves the AI can do something. An MVP proves users will pay for it. A prototype might be a Jupyter notebook that summarizes documents. An MVP is a web app where users log in, upload documents, get summaries, and come back to do it again. The prototype validates technology. The MVP validates the business.

Start Building

The best AI MVPs follow the same pattern: validate the AI hypothesis, build the core AI feature first, wrap it in the thinnest possible product shell, and ship to real users in weeks.

The founders who struggle are the ones who build the product first and hope the AI works. The founders who win are the ones who prove the AI first and build the product around it.

If you are a founder with an AI product idea, start building today. Use Lovable, Bolt, Cursor, or v0.dev for the frontend and basic workflows. When you hit the hard parts (AI infrastructure, architecture, production hardening, scaling, security), Downshift works alongside you to get those pieces right.